Microscopy has undergone a revolution in the last two decades. Optical microscopy techniques have made enormous advances in signal detection, acquisition speed, spatial resolution, and optical illumination, and have gained novel capabilities such as 3D spatial and temporal reconstruction of living biological systems and automated image acquisition and processing. These achievements have not only opened previously inconceivable perspectives in biology, medicine and materials science, but have also transformed microscopy into a highly quantitative, data-analytical technology.

Consequently, the extremely high data throughput of modern microscopy techniques has created new, complex challenges: storing massive data sets over long periods of time, slow data transmission, and the high computing capacity required for image acquisition and processing. For instance, light-sheet or 3D electron microscopy experiments typically generate tens or hundreds of terabytes of multidimensional image data per day, ten times more than was produced five years ago.

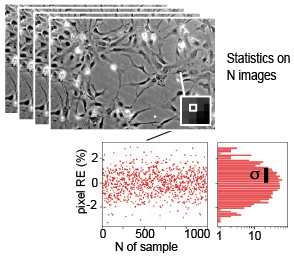

Image compression is the only durable and effective solution for archiving and handling big data in microscopy. However, commonly used efficient image compression algorithms (such as JPEG) are lossy, support only a limited dynamic range, and generate artefacts. They are therefore not suitable for analysis, especially for deep learning, where a small artefact in the input data may lead to erroneous results.

The goal of the project is to adapt and validate a compressed image format suited to microscopy workflows, one that combines high compression factors with preservation of the raw quality of the original data.
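To make the two criteria concrete, the sketch below measures a compression factor and checks bit-exact preservation on a synthetic 16-bit frame. It is only an illustration: it uses DEFLATE from Python's standard `zlib` module as a generic lossless stand-in, not the format targeted by the project, and the helper names (`synthetic_image`, `compress_lossless`, `compression_factor`) and the test image are hypothetical.

```python
import random
import zlib
from array import array


def synthetic_image(width=256, height=256, seed=0):
    """Hypothetical 16-bit test frame: a smooth gradient plus mild noise."""
    rng = random.Random(seed)
    pixels = array("H")  # unsigned 16-bit samples
    for y in range(height):
        for x in range(width):
            pixels.append(1000 + 4 * x + 2 * y + rng.randint(0, 7))
    return pixels


def compress_lossless(pixels):
    """Compress the raw pixel bytes with DEFLATE (zlib), a generic lossless codec."""
    return zlib.compress(pixels.tobytes(), level=9)


def decompress(blob):
    """Rebuild the 16-bit pixel array from the compressed bytes."""
    restored = array("H")
    restored.frombytes(zlib.decompress(blob))
    return restored


def compression_factor(pixels, blob):
    """Ratio of raw size to compressed size (higher is better)."""
    return len(pixels.tobytes()) / len(blob)


image = synthetic_image()
blob = compress_lossless(image)
restored = decompress(blob)

# Criterion 1: the raw data must be preserved exactly.
print("bit-exact round trip:", restored == image)
# Criterion 2: the compressed data should be substantially smaller.
print("compression factor: %.2f" % compression_factor(image, blob))
```

A generic codec such as this one typically yields only modest factors on noisy detector data, which is one reason a format tailored to microscopy images is of interest.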

Project partner(s)

Project leader - team

Jérôme Extermann (HEPIA), Enrico Pomarico (HEPIA), Cédric Schmidt (HEPIA), Gabriel Giardina (HEPIA), Mathieu Di Franco (HEPIA), Yoan Neuenschwander (HEPIA), Adrien Roux (HEPIA)